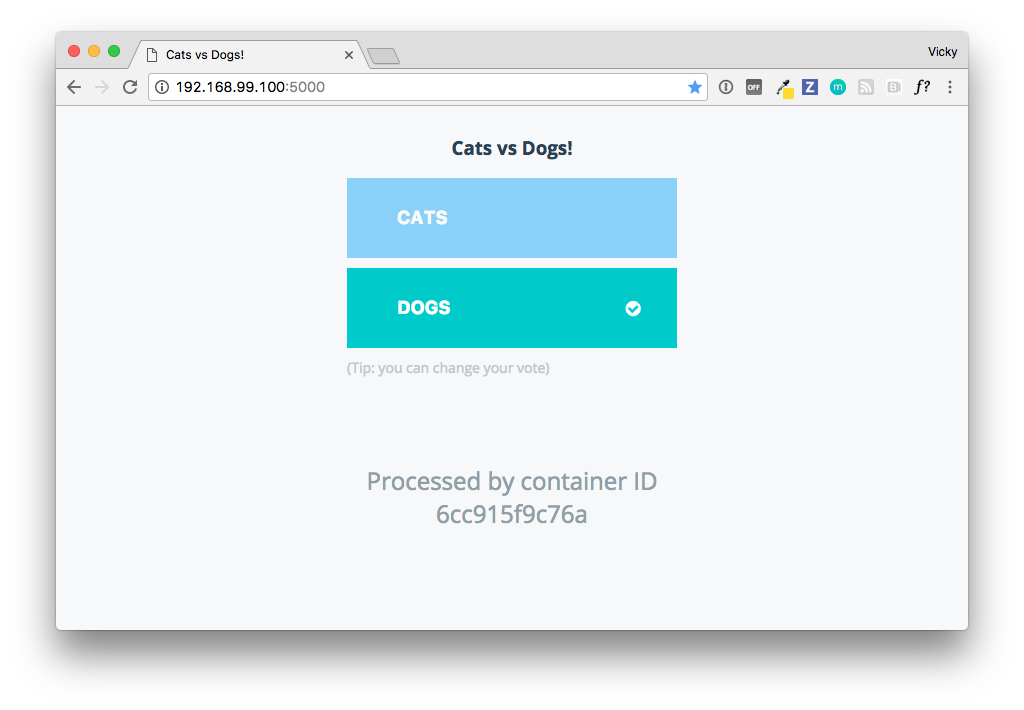

Try it out and vote

Estimated reading time: 2 minutes

Now that the app is up and running, let’s try it out.

We will vote for cats and dogs, view the results, and monitor the manager and worker nodes, containers and services on a visualizer.

Vote for cats and dogs

Go to <MANAGER-IP>:5000 in a web browser to view the voting page from a user perspective.

Click on either cats or dogs to vote.

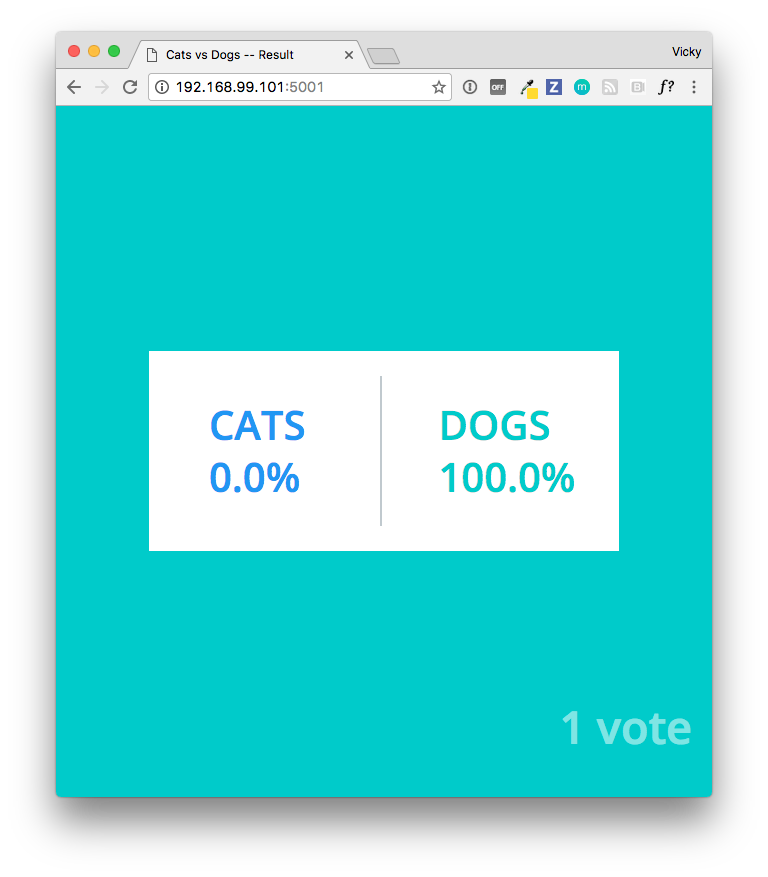

View the results tally

Now, go to <MANAGER-IP>:5001 in a web browser to view the voting results tally, as one might do in the role of poll coordinator. In the current configuration of the app, the tally is shown as percentages.

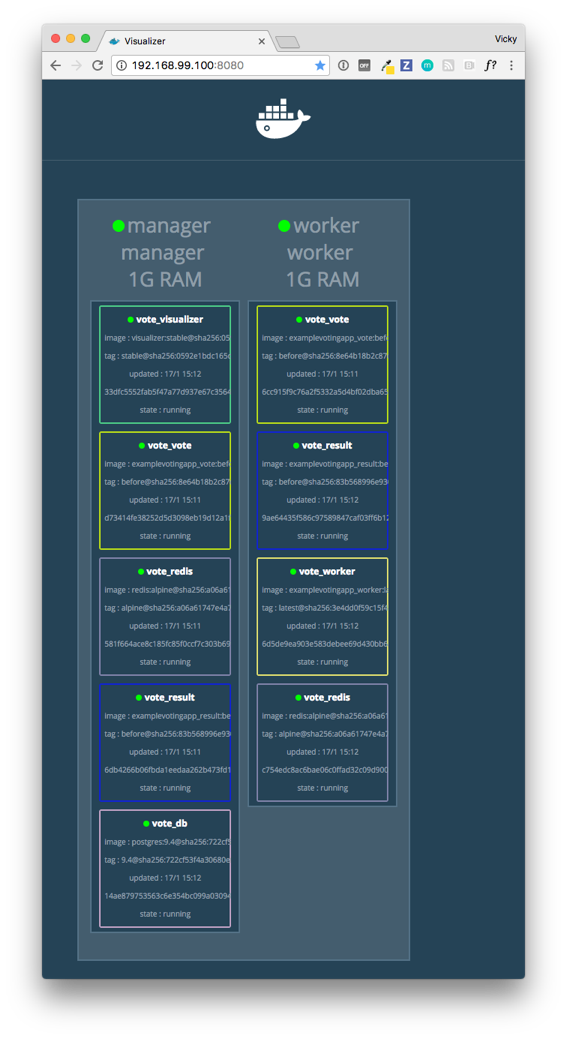

Use the visualizer to monitor the app

Go to <MANAGER-IP>:8080 to get a visual map of how the application is deployed.

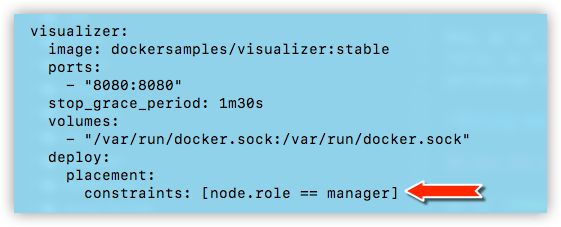

The visualizer surfaces some of the configuration and characteristics from docker-stack.yml, so you can see those strategies in action here. For example:

-

We have two nodes running: a

managerand aworker. -

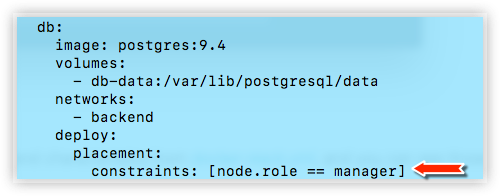

The manager node is running the PostgreSQL container, as configured by setting

[node.role == manager]as a constraint in the deploy key for thedbservice. This service must be constrained to run on the manager in order to work properly.

- The manager node is also running the visualizer itself, as configured by setting [node.role == manager] as a constraint in the deploy key for the visualizer service. This service must be constrained to run on the manager in order to work properly. If you remove the constraint and the service ends up on a worker, the web page display will be blank.

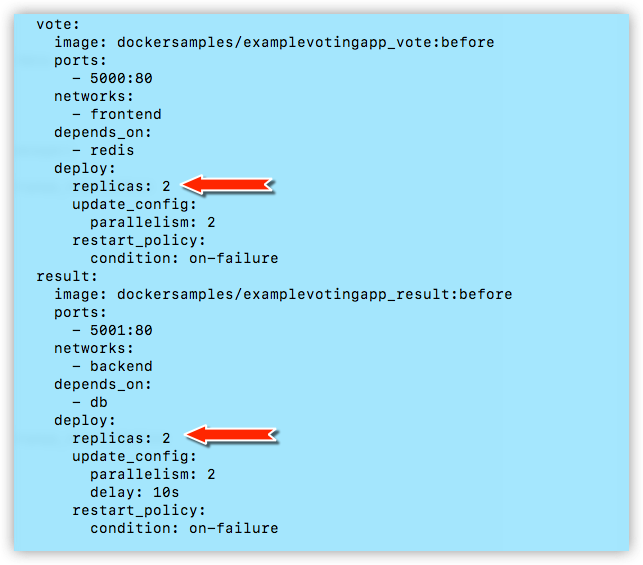

- Two of the services are replicated:

  - vote (represented in the visualizer by vote_vote)
  - result (represented in the visualizer by vote_result)

  Both of these services are configured as replicas: 2 under the deploy key. In the current state of this app, one replica of each is running on the manager and one on the worker. However, since neither service is explicitly constrained to a node in docker-stack.yml, all or some of these replicas could run on either node, depending on workload and the re-balancing choices we’ve left to the swarm orchestration.
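The placement and replication settings described above live under each service's deploy key. As a minimal sketch, assuming a version 3 Compose file, the relevant part of docker-stack.yml might look something like the fragment below. The version line and the layout are assumptions for illustration, and keys such as image, ports, networks, and volumes are left out, so compare it against your own file rather than copying it.

```yaml
# Illustrative fragment only, not the complete docker-stack.yml.
# image, ports, networks, and volumes are omitted for brevity.
version: "3"   # assumed; the deploy key requires a version 3 Compose file
services:
  db:
    deploy:
      placement:
        constraints: [node.role == manager]   # keep PostgreSQL on the manager
  visualizer:
    deploy:
      placement:
        constraints: [node.role == manager]   # page is blank if this runs on a worker
  vote:
    deploy:
      replicas: 2   # no placement constraint, so the swarm picks the nodes
  result:
    deploy:
      replicas: 2   # likewise free to run on either node
```

Because vote and result carry no placement constraint, the distribution you see in the visualizer can differ from the screenshots.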

What’s next?

In the next steps, we’ll customize the app and redeploy it.
